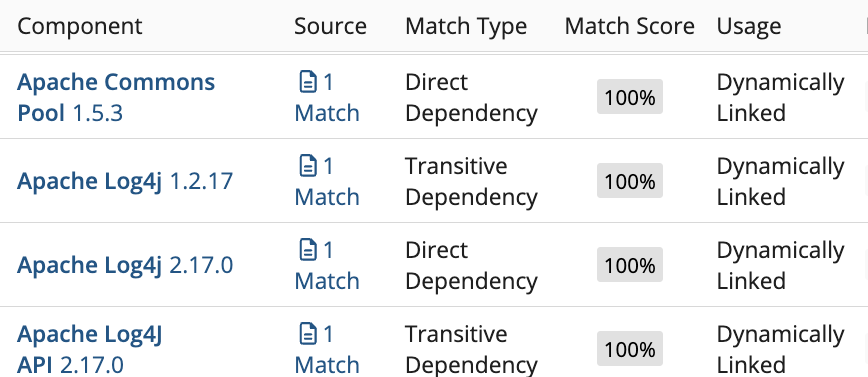

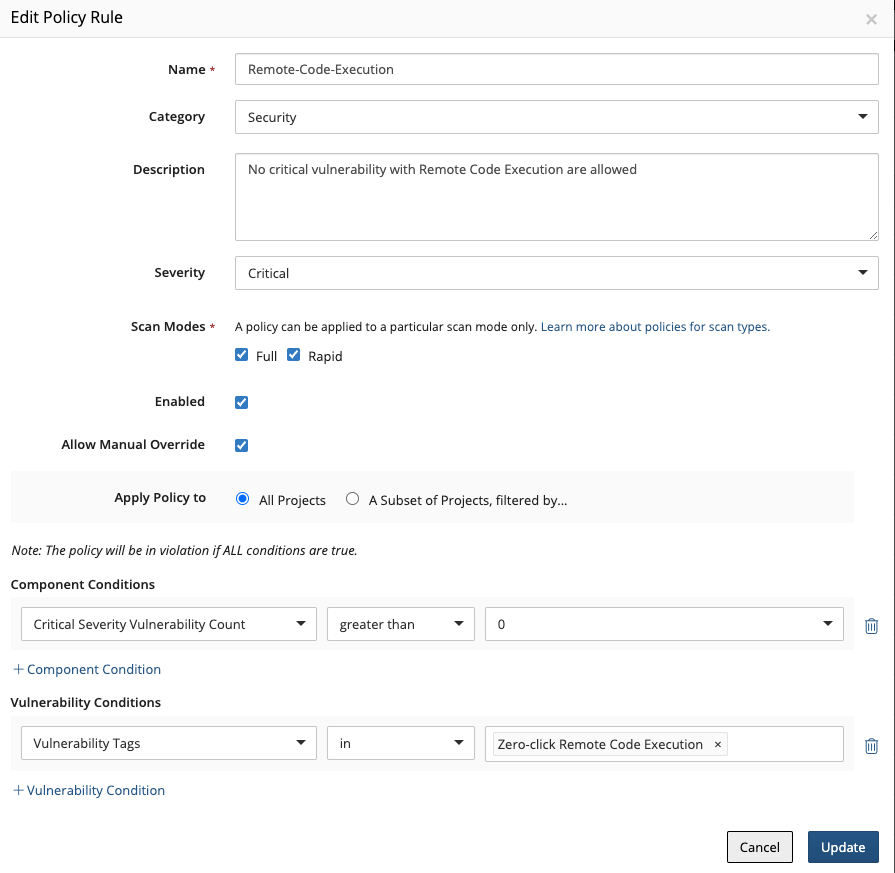

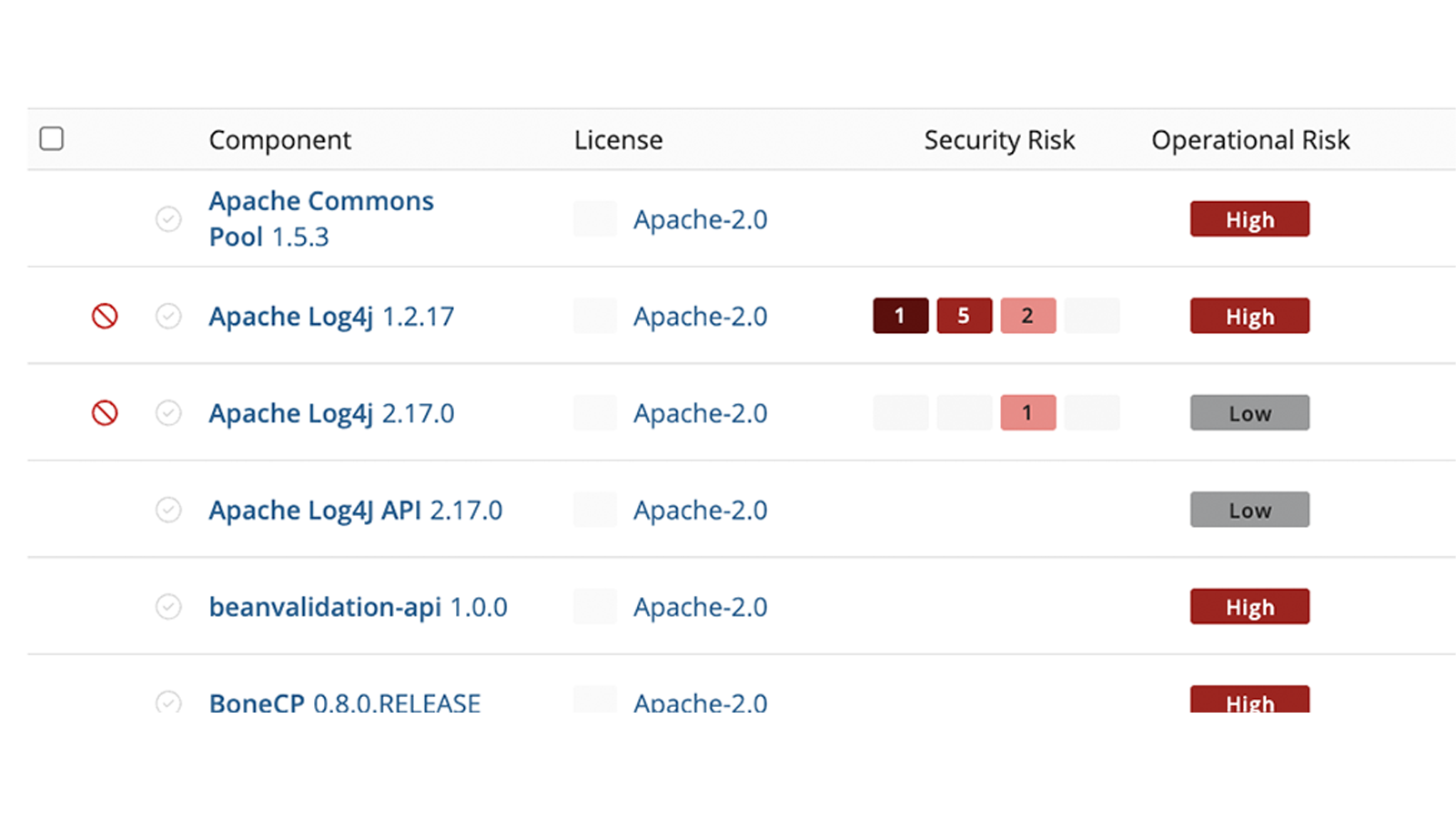

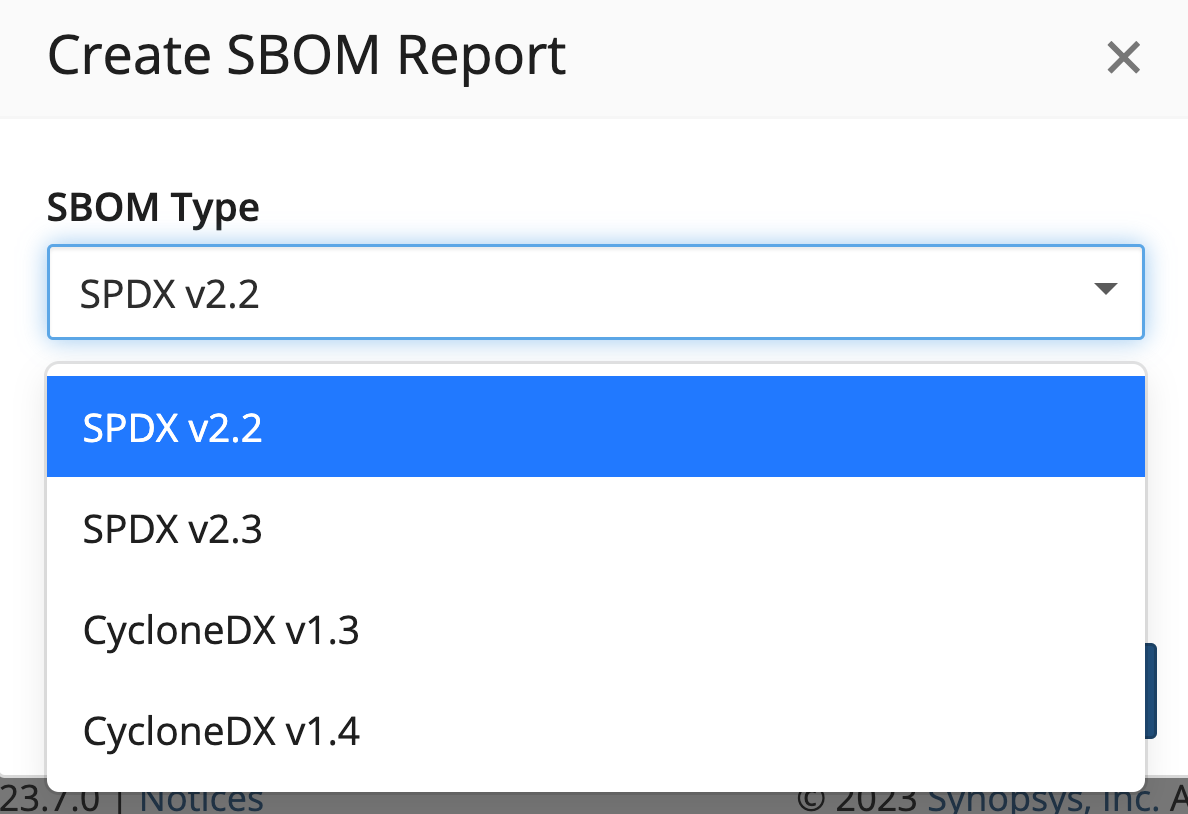

Software Composition Analysis

Track open source dependencies. Manage software supply chain risks.